Build a Streamlit WebUI for Your Local MLX Model

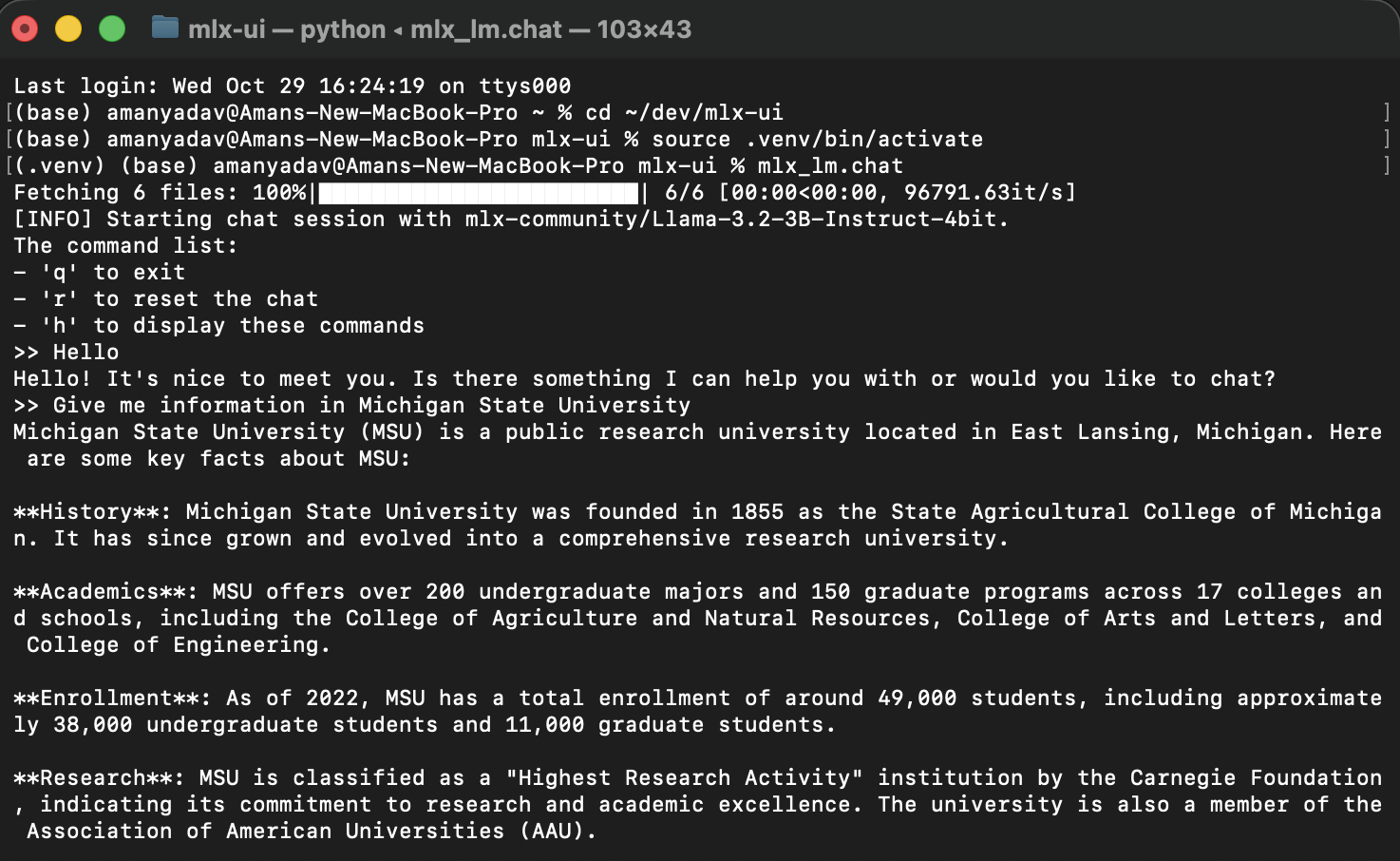

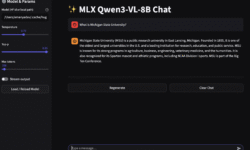

In Part 1 of this series, we installed MLX and ran a local large language model (LLM), Mistral 7B Instruct, directly on macOS. Now let's take it a step further and build a web-based chat interface with Streamlit, so you can interact with your local model just as you would with ChatGPT, right from your browser.

Why Streamlit?

Streamlit is a powerful Python…